Top 5 Large Language Models You Must Know

Revolutionizing natural language processing with large language models

Natural language processing (NLP) is a field of artificial intelligence that focuses on enabling computers to understand, interpret, and generate human language. In recent years, large language models trained on vast amounts of text have driven rapid progress in NLP. These models can perform a wide range of tasks, and on some benchmarks they match or approach human-level scores. This article introduces five large language models that have had a significant impact on the field and that underpin a wide range of applications.

GPT-3 (Generative Pre-trained Transformer 3) - Developed by OpenAI and released in 2020, GPT-3 was one of the largest language models of its time, with 175 billion parameters. It can generate human-like text and perform a wide range of natural language processing tasks, including translation, summarization, and question answering. Its key contribution is in-context learning: given only a task description (zero-shot) or a handful of worked examples (few-shot) in the prompt, it can attempt a new task without any fine-tuning or gradient updates on that task.
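To make the idea of prompting without task-specific training concrete, here is a minimal sketch of how a zero-shot prompt and a few-shot prompt might be assembled as plain text. The `build_prompt` helper and the `Input:`/`Output:` layout are illustrative assumptions, not a format required by GPT-3 or by any particular API.

```python
def build_prompt(instruction, examples, query):
    """Assemble a plain-text prompt (hypothetical layout, for illustration only).

    With an empty `examples` list this is a zero-shot prompt; with a few
    worked examples it becomes a few-shot prompt.
    """
    lines = [instruction]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
    # The final "Output:" is left open for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


zero_shot = build_prompt("Translate English to French.", [], "peppermint")
few_shot = build_prompt(
    "Translate English to French.",
    [("sea otter", "loutre de mer"), ("cheese", "fromage")],
    "peppermint",
)
print(few_shot)
```

In both cases the model's weights are untouched; the only thing that changes is the text it is asked to continue.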

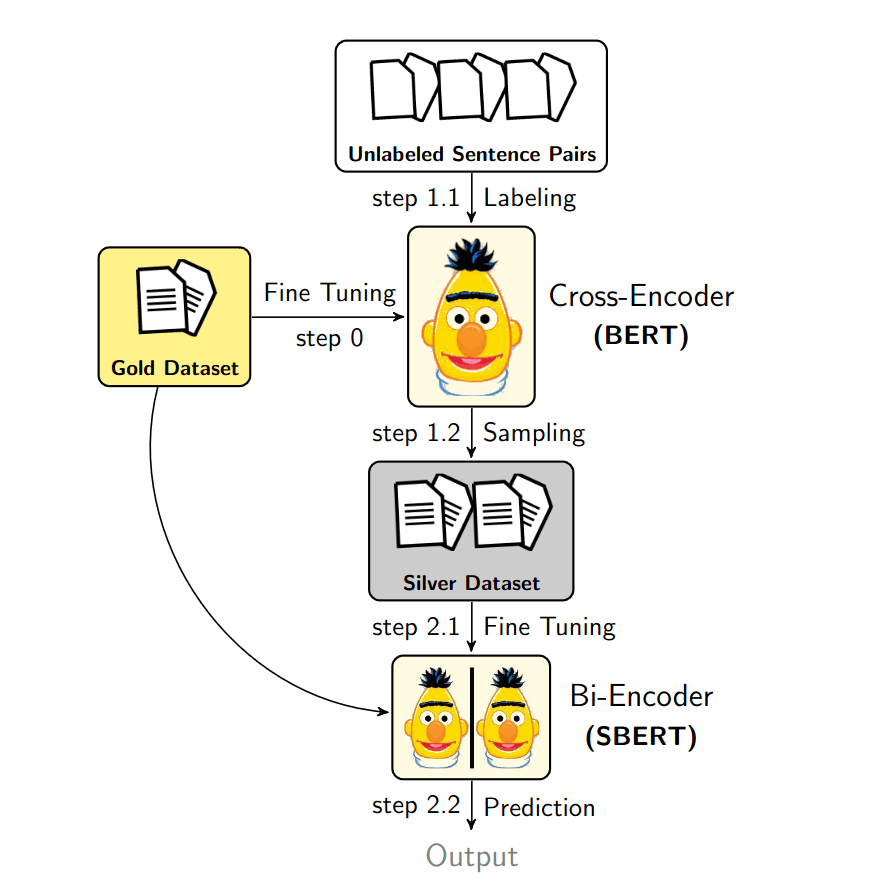

BERT (Bidirectional Encoder Representations from Transformers) - Developed by Google, BERT achieved state-of-the-art performance on many natural language processing benchmarks when it was released in 2018. It is particularly effective at tasks that require understanding the context in which a word is used, such as natural language inference and named entity recognition, because it conditions on the words both to the left and to the right of each position. BERT is pre-trained mainly with masked language modeling, in which a fraction of the input tokens (about 15%) is hidden and the model must predict them from the remaining words. It is also trained on a next sentence prediction objective.
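The masking step can be sketched in a few lines. This is a simplified illustration, assuming whitespace tokenization and always substituting a `[MASK]` placeholder; the `mask_tokens` helper is hypothetical, and BERT's actual procedure works on subword tokens and sometimes keeps or randomly replaces the selected token instead of masking it.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens and return (masked_tokens, labels).

    `labels` maps each masked position to the original token, which is
    what the model would be trained to predict at that position.
    """
    rng = random.Random(seed)
    n_mask = max(1, round(len(tokens) * mask_prob))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    labels = {}
    for pos in positions:
        labels[pos] = tokens[pos]
        masked[pos] = MASK
    return masked, labels


tokens = "the cat sat on the mat because it was tired".split()
masked, labels = mask_tokens(tokens)
print(masked)
print(labels)
```

Because the unmasked words on both sides of each gap remain visible, the model can use left and right context together when filling it in.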

XLNet - Developed by researchers at Carnegie Mellon University and Google, XLNet is an autoregressive language model that, like BERT, uses context from both sides of a word, but without relying on artificial mask tokens in its input. At release it reported better results than BERT on many natural language processing tasks. It also builds on Transformer-XL, which lets it carry context across longer stretches of text than a single fixed-length segment; this helps on tasks that involve long documents. XLNet is trained with permutation language modeling: the tokens keep their original positions, but the order in which they are predicted is sampled at random, so each token learns to be predicted from many different subsets of the other tokens in the sequence.
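A small sketch may help show what permutation language modeling changes. Under the usual description of the technique, the sentence itself is not shuffled; only the prediction order is. The `permutation_contexts` helper below is a hypothetical illustration that samples one such order and lists which positions would be visible when each token is predicted. It ignores the attention-mask machinery a real implementation needs.

```python
import random


def permutation_contexts(tokens, seed=0):
    """Sample one prediction order and list the visible context per step.

    Returns (order, steps), where each step is (target_position,
    visible_positions). Tokens stay at their original positions; only the
    order in which they are predicted is permuted.
    """
    rng = random.Random(seed)
    order = list(range(len(tokens)))
    rng.shuffle(order)
    steps = []
    for i, target in enumerate(order):
        # Positions predicted earlier in the sampled order are visible.
        visible = sorted(order[:i])
        steps.append((target, visible))
    return order, steps


tokens = ["New", "York", "is", "a", "city"]
order, steps = permutation_contexts(tokens)
for target, visible in steps:
    context = [tokens[p] for p in visible]
    print(f"predict {tokens[target]!r} at position {target} given {context}")
```

Averaged over many sampled orders, every token ends up being predicted from context on both its left and its right.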

RoBERTa (Robustly Optimized BERT Pretraining Approach) - Developed by Facebook AI together with the University of Washington, RoBERTa keeps BERT's architecture but revisits how it is trained, which yields improved performance on many natural language processing tasks. It uses the same masked language modeling objective, but trains longer, with larger batches and on substantially more data, drops the next sentence prediction objective, and applies dynamic masking so that the masked positions change each time a sequence is seen.
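One of the training changes described in the RoBERTa paper is dynamic masking: rather than fixing the masked positions once during preprocessing, a new mask is drawn whenever a sequence is fed to the model. The sketch below contrasts the two approaches in a deliberately simplified form; the `mask_once` helper, the whitespace tokenization, and the four "epochs" are assumptions made for illustration.

```python
import random

MASK = "[MASK]"


def mask_once(tokens, rng, mask_prob=0.15):
    """Return a copy of `tokens` with a random subset replaced by MASK."""
    n_mask = max(1, int(len(tokens) * mask_prob))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    return [MASK if i in positions else tok for i, tok in enumerate(tokens)]


tokens = "large language models are trained on vast amounts of text data".split()

# Static masking: the pattern is chosen once and reused in every epoch.
static_rng = random.Random(0)
static_view = mask_once(tokens, static_rng)
static_epochs = [static_view for _ in range(4)]

# Dynamic masking: a fresh pattern is drawn each time the sequence is served.
dynamic_rng = random.Random(0)
dynamic_epochs = [mask_once(tokens, dynamic_rng) for _ in range(4)]

for epoch, view in enumerate(dynamic_epochs):
    print(epoch, " ".join(view))
```

With dynamic masking the model is likely to see different words hidden in the same sentence over the course of training, which gives it more varied prediction targets from the same data.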

T5 (Text-To-Text Transfer Transformer) - Developed by Google, T5 handles a wide range of natural language processing tasks with a single encoder-decoder model by casting every task as text-to-text: the input is a string, usually starting with a short task prefix such as "translate English to German:" or "summarize:", and the output is also a string. The same model, training objective, and decoding procedure can therefore be used for translation, summarization, question answering, and even classification, where the label is generated as text. T5 is pre-trained on the C4 web-text corpus with a denoising objective in which spans of the input are dropped and the model must generate them, and it is then fine-tuned on downstream tasks in the same text-to-text format.
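The single-model idea is easiest to see in how training examples are laid out: every task is reduced to an input string and a target string, with a short task prefix at the front of the input. The sketch below is an illustration only; the `to_text_to_text` helper and the dictionary layout are hypothetical, while the prefixes follow the style of examples shown in the T5 paper.

```python
def to_text_to_text(task_prefix, source, target):
    """Cast one labeled example into a pair of plain strings."""
    return {"input": f"{task_prefix}: {source}", "target": target}


examples = [
    # Translation: the target is the translated sentence.
    to_text_to_text("translate English to German", "That is good.", "Das ist gut."),
    # Summarization: placeholders stand in for real article text.
    to_text_to_text("summarize", "<long article text>", "<short summary>"),
    # Classification: the label itself is emitted as text.
    to_text_to_text("cola sentence", "The course is jumping well.", "not acceptable"),
]

for example in examples:
    print(example["input"], "->", example["target"])
```

Because every example has the same shape, one model and one loss function can be trained on all of these tasks at once.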

In conclusion, large language models have driven significant advances in natural language processing in recent years. Trained on vast amounts of text data, they can perform a wide range of tasks, in some cases approaching human-level performance on standard benchmarks. GPT-3, BERT, XLNet, RoBERTa, and T5 are among the best-known examples: each achieved state-of-the-art results on many benchmarks when it was released, and each introduced or refined a training technique that later models build on. As development continues, we can expect further improvements and a growing range of applications.